Kim Asendorf on Breaking His Own Rules

Kim Asendorf's PXL POD, the follow-up to PXL DEX (2025), consists of 256 on-chain pieces built around a cylindrical form and an expanded pixel ecosystem. PXL POD extends the protocols introduced in its predecessor while breaking its own rules. The artist speaks with Peter Bauman (Monk Antony) about sequels and liberation, the aesthetics of isolation and the allure of vibe coding. A special version of the work will appear as PXL DUO POD at Art Basel Hong Kong's Zero 10 with Nguyen Wahed in March 2026.

Peter Bauman: PXL DEX (2025) pushed your aesthetic into the third dimension. What is PXL POD doing aesthetically with its rotating form? In what ways is it in dialogue with its predecessor and where does it depart?

Kim Asendorf: PXL POD is partly inspired by the office pods you find in large open rooms. They're for when you need a silent space: capsules or pods with room for one or two people, or benches in them, so that you can work in isolation or take calls in a quiet place.

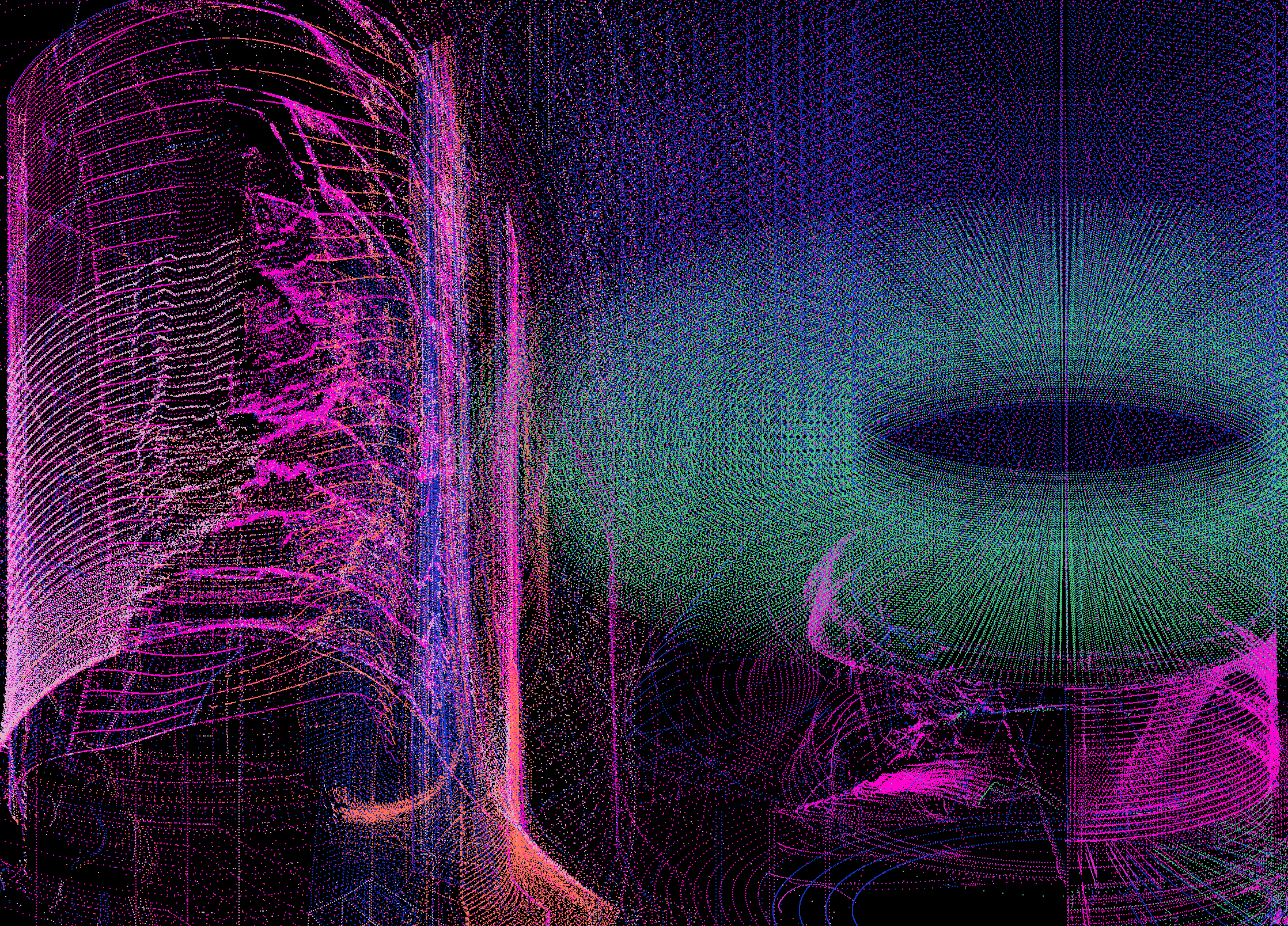

It's not about exactly the shape; it's more about what these pods do. They isolate. Also with PXL DEX, there was this urge to work more in empty space. It's three-dimensional, even if it's just a black canvas. As soon as you add some three-dimensional elements to it, the black space becomes infinite.

I wanted the atmosphere of isolation, like being in outer space. You can't even hear anything anymore because there is no air to transport sound.

PXL DEX was a cube, which has clear X, Y, Z directional coordinates that you can move along. In a cylinder that changes. Of course, you have the same coordinates but they do not fit the shape anymore. So all the movements needed to be translated into a radial system. You need to adjust everything to a center axis, and to the angle and distance from that axis, to create movement that feels right. That is not just my opinion; that is simply how our brain works. You cannot have something cylindrical and treat it like a cube.
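The radial translation described here is essentially a move from Cartesian to cylindrical coordinates. The following is a minimal sketch of that idea, not the work's shader code: the function names are hypothetical and the choice of the vertical axis (here `y`) as the center axis is an assumption.

```python
import math

def to_cylindrical(x, y, z):
    """Convert Cartesian (x, y, z) to (radius, angle, height).

    Assumption: the cylinder's center axis is the y axis.
    """
    radius = math.hypot(x, z)   # distance to the center axis
    angle = math.atan2(z, x)    # angle around the center axis, in radians
    return radius, angle, y

def to_cartesian(radius, angle, height):
    """Inverse of to_cylindrical."""
    return radius * math.cos(angle), height, radius * math.sin(angle)

def orbit(point, delta_angle):
    """Move a point around the center axis, keeping its radius and height."""
    radius, angle, height = to_cylindrical(*point)
    return to_cartesian(radius, angle + delta_angle, height)
```

In this form a movement is expressed as a change in angle, radius or height rather than as a step along X or Z, which is one way to read "adjusting everything to a center axis".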

When I changed the shape from a cube to this cylinder, I could not reuse many of the algorithms. That was a good thing because if everything had immediately turned out great, I would have used almost the same code. It forced me to really rethink and rewrite all 16 animation algorithms that are within the shader. You could say, a bit simplistically, that PXL DEX has just been bent into a circle. Of course that's not true.

I was really finding something new without being able to rely on something that has already been created within PXL DEX. I was quite happy because both works feel absolutely from the same world. They really belong together but are separated enough to be accepted by myself as their own thing.

Peter Bauman: Beyond aesthetics, how does PXL POD extend the conceptual territory of PXL DEX, such as its engagement with protocols?

Kim Asendorf: PXL DEX was a bit more provocative, in that the title also signaled that there were tokens involved. PXL POD is a bit more free, like a sequel.

The idea here is really to prove the point that the PXL token, which is already out and used in PXL DEX, can now be used in the second work.

Theoretically, you can mint the PXL POD without pixels and use your pixels from your PXL DEX or wherever you got some and put them in. It's an ecosystem that is growing. The collections have similar traits or aesthetics and belong together but can also stand on their own.

The main achievement for me here was to try to keep up with the aesthetic and be able to create something that is visually not just a sequel. Ideally, the work is good if people say it's better than PXL DEX.

That is, of course, a challenge because it's very difficult to make a sequel. You cannot compare it to PXL DEX, where you had an additional reserved minting allowance of 500,000 pixels. That is different with PXL POD: there's the option to mint anywhere from zero up to a million pixels with the NFT in one transaction.

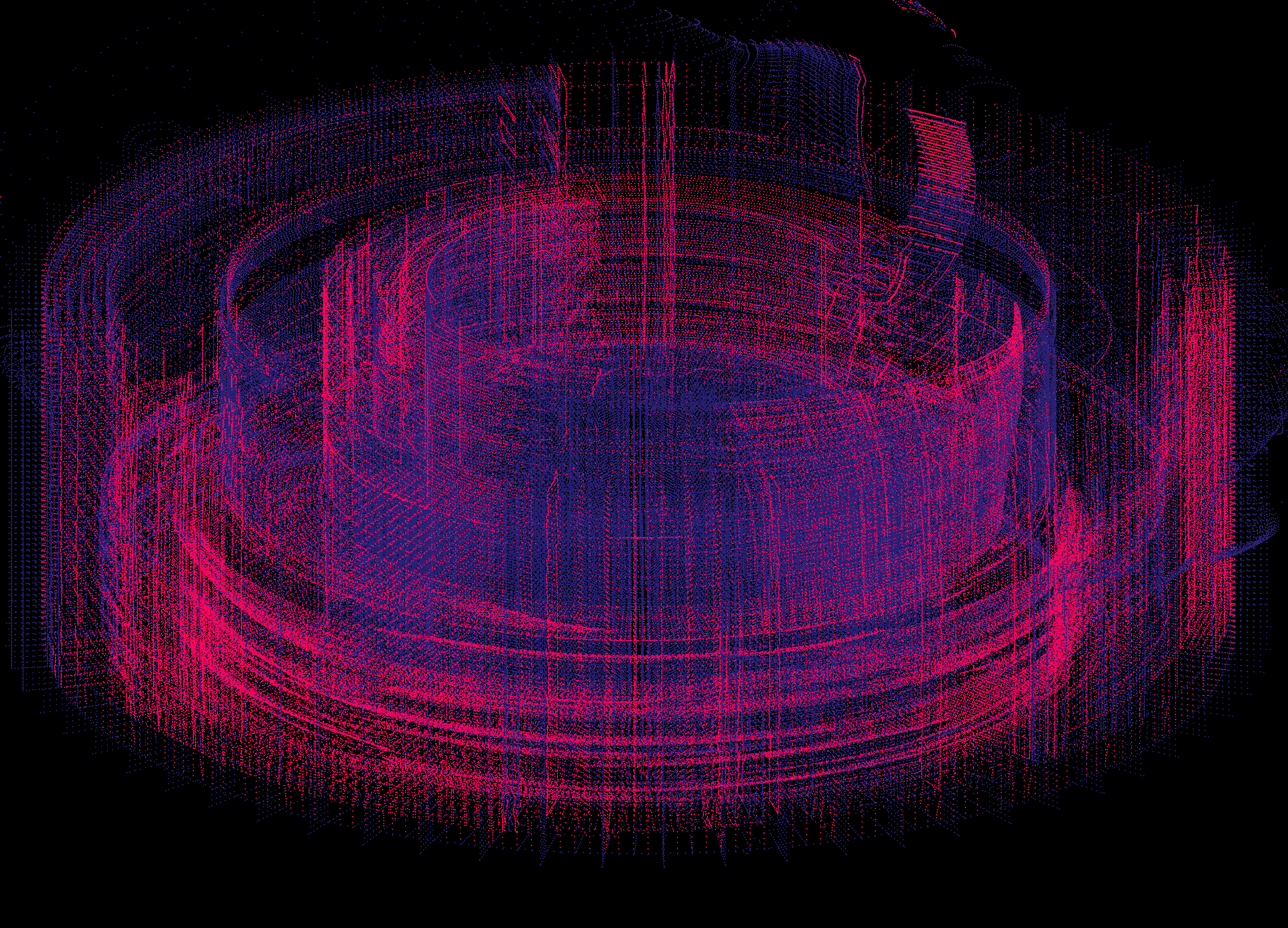

Another thing I wanted to explore came from watching PXL POD open in two browser windows next to each other. I had the feeling that together they exposed some synergy between them. That can, of course, also happen with a lot of generative artworks. If you put two outputs next to each other, it can greatly change your perception of a single output. So I created a Duo Pod version within the collection, which is what will be shown at Art Basel Hong Kong.

Peter Bauman: We spoke about minting as participation with PXL DEX. How does that carry over into PXL POD?

Kim Asendorf: For the mint, you need the PXL DEX in your wallet to prove that you are the owner. There's no delegation, there's no allow list or anything like this in a classic sense. I don't collect any addresses.

After minting, the PXL DEX gets marked as used in the PXL POD smart contract. So with one PXL DEX, you can only mint one PXL POD. That felt like a very simple mechanic. It's maybe not the most comfortable for the user but it feels straightforward and can be validated on the blockchain.
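The rule described here (hold a PXL DEX in the minting wallet, and each PXL DEX can be used for one PXL POD only) can be modelled roughly as below. This is an illustrative Python sketch, not the actual smart contract: the class, method and field names are hypothetical, and the pixel range follows the zero-to-one-million option mentioned earlier in the conversation.

```python
MAX_PIXELS_PER_MINT = 1_000_000  # upper bound mentioned in the interview

class PodMintSketch:
    """Toy model of the mint gate; every name here is hypothetical."""

    def __init__(self, dex_owners):
        self.dex_owners = dict(dex_owners)  # PXL DEX token id -> current holder
        self.used_dex = set()               # PXL DEX ids already used to mint
        self.pod_owners = {}                # PXL POD token id -> holder

    def mint(self, caller, dex_id, pixels=0):
        # The caller must hold the PXL DEX directly: no delegation, no allow list.
        if self.dex_owners.get(dex_id) != caller:
            raise PermissionError("caller does not hold this PXL DEX")
        # Each PXL DEX can be used once.
        if dex_id in self.used_dex:
            raise ValueError("this PXL DEX has already been used to mint")
        if not 0 <= pixels <= MAX_PIXELS_PER_MINT:
            raise ValueError("pixel amount out of range")
        self.used_dex.add(dex_id)
        pod_id = len(self.pod_owners) + 1
        self.pod_owners[pod_id] = caller
        return pod_id
```

Because the check reads current ownership rather than a stored list of addresses, anyone can verify eligibility from public state, which matches the point about validation on the blockchain.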

I may be forcing people to open their vault wallet and get the token out again just to mint. It's an interesting mechanic and I’m curious how it might affect the experience. Every transaction, especially something valuable, comes with intense emotions, at least for me or I think for many other people as well. You get a little nervous or a lot, depending on what's going on. So I think that it should be part of the experience.

You should feel something. I want people to feel something. You should feel a risk to participate.

Peter Bauman: You've been posting images on Twitter from early February showing how the work changes as more pixels are added in various states of fullness. Is there an ideal density to you aesthetically? A point where the work feels complete?

Kim Asendorf: Social media is always just a playground when you work with real-time animations. The render quality is a core reason why real-time animations can make so much sense. You can render something that cannot be captured in a movie. Making movie replicas for social media is already a huge hassle; you cannot really show the actual work.

Social media feels like a very low-fi, trashy version of what the actual work is.

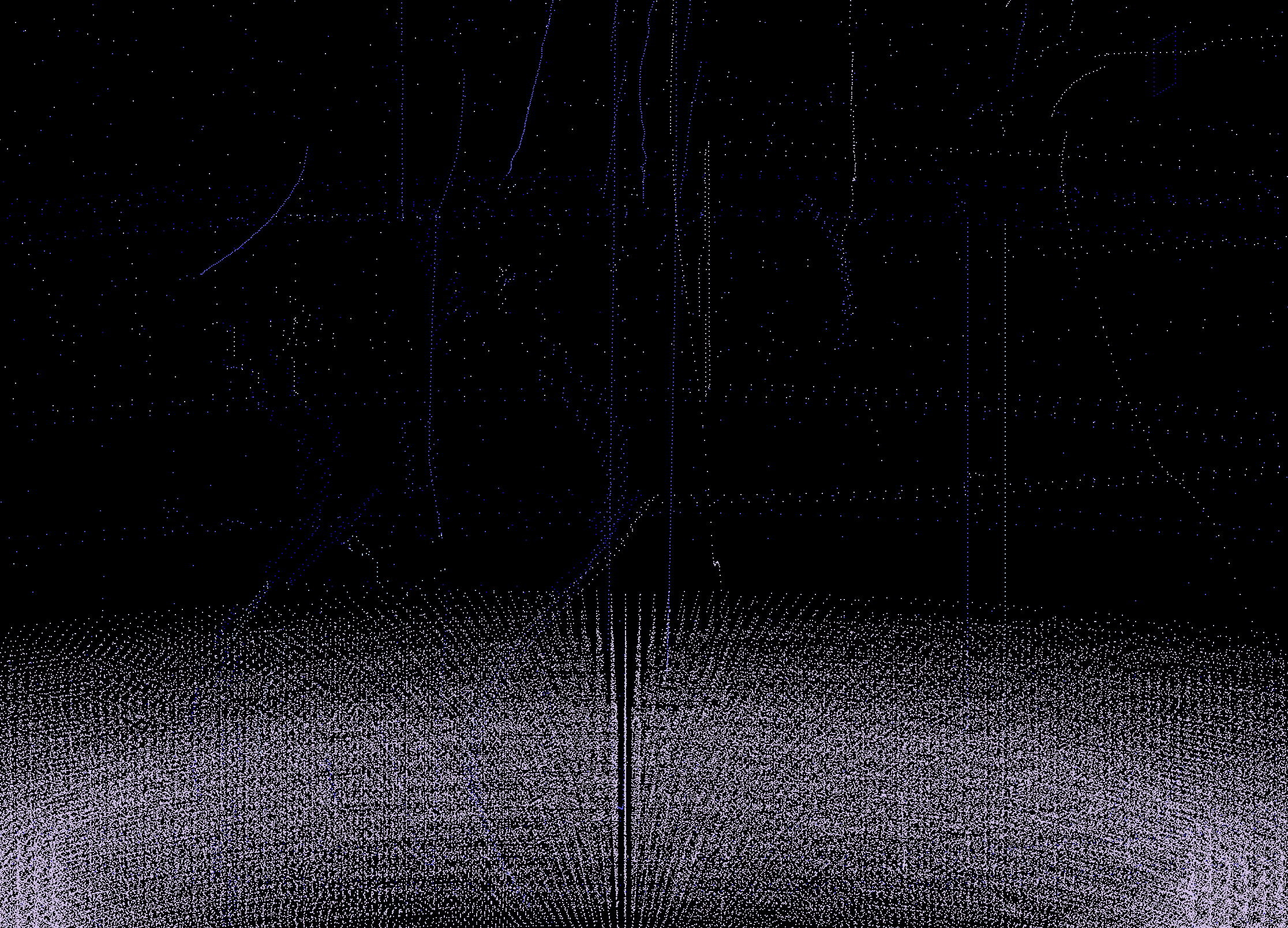

Besides that, I just wanted to play around a bit with it. It feels like revealing more over time. When I just show you 1,000 pixels, you can barely recognize the shape. To really get the boundaries of the shape, you need more pixels.

It's more or less a reveal mechanic, making the structure and complexity visible step by step.

Peter Bauman: How do you see the broader PXL ecosystem continuing to evolve? And how did you approach color with PXL POD after the constraints you set for PXL DEX?

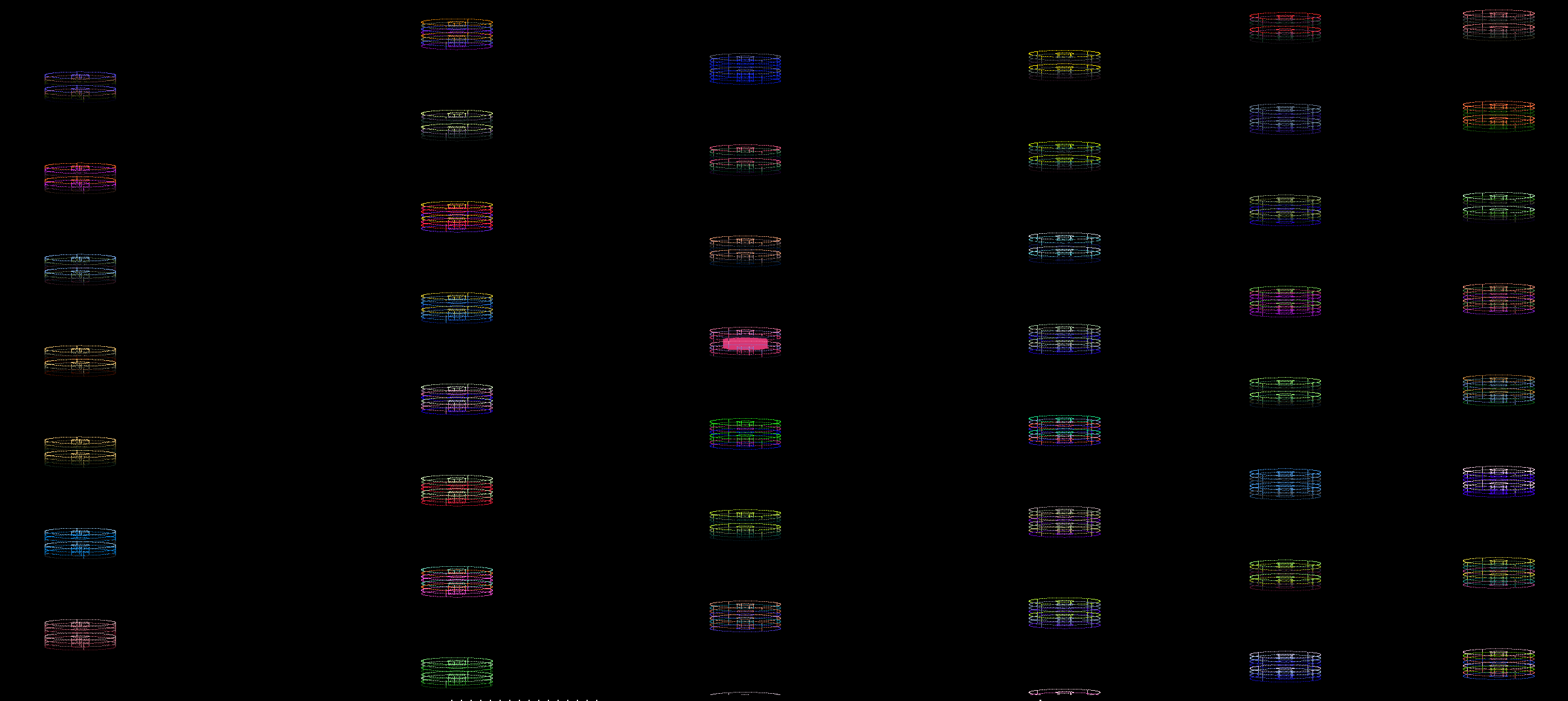

Kim Asendorf: The color palettes on PXL DEX were all handpicked after I generated random color palettes. They were obviously not completely randomly generated. I used different strategies to generate these color palettes to create about five hundred. Then I stripped it down again to two hundred fifty-six. With PXL DEX, there were always two color pairs. These color pairs were also rendered in a gradient.

So there were four colors (two pairs) and one pair was eventually rendered as a gradient. Let's say you have red and green with blue and white. It means one will always be a gradient, like red-green. The red and green do not blend with the blue and white. So these two color pairs are separated.

With PXL POD, I took a similar route. I also generated many color palettes and manually selected them. But here we have four colors that are completely independent. So it's a four-color palette, with no gradient rendering happening. The difference is that the color aesthetic is more stable. But there's also more complexity because for PXL DEX, I limited the colors to certain values. You only had three steps for each color channel (red, green and blue), which extremely limits the total number of colors.
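To put a number on that limitation: three allowed steps on each of three channels gives 3 × 3 × 3 = 27 possible colors. The sketch below works this out, reading "three steps" as three allowed values per channel; the concrete step values and the 8-bit channel depth used for comparison are assumptions, not details from the interview.

```python
from itertools import product

# Hypothetical step values; the interview only says there were three per channel.
STEPS = (0, 128, 255)

# Every (red, green, blue) combination under the three-step restriction.
restricted_colors = list(product(STEPS, repeat=3))
restricted_count = len(restricted_colors)  # 3 ** 3

# For comparison, assuming unrestricted 8-bit channels.
unrestricted_count = 256 ** 3

print(restricted_count, unrestricted_count)
```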

With PXL POD, it's completely free. There's no limitation. There could be four different shades of blue on one color palette. For me, it was very liberating to simplify the rules.

I think that makes the collection a little more diverse in terms of palettes; I could go into some darker corners. I wanted to have some parts feel dark, even melancholic, as opposed to everything being happy bright colors. The whole collection is sorted by brightness. Number one is the least bright token and so on.

I intervened again with the colors: at some point I added some completely manual color palettes because I did not want to be restricted to only randomly generated palettes. I felt I could do more.

I had more freedom and I think that was only possible because it's a sequel. I could break some rules from the first edition and even feel a bit, you know, rebellious.

The color palettes are again completely stored onchain as with the whole code and all the pixel interactions. The website that I have running is an interface but everything—the whole code, the artwork—is completely integrated in the smart contract and could be used without a website if you used Etherscan or any other access point to the Ethereum network.

Peter Bauman: You've now shown new work at both of Art Basel's first two Zero 10 editions. What exactly will you be showing at Art Basel Hong Kong?

Kim Asendorf: The Duo Pods I mentioned earlier will be showing in Hong Kong. There will be a two-by-one LED screen so you can have these two pods next to each other. The idea is to make it interactive, not with camera tracking or anything sensor-based, but with a trackpad or touchpad. You'll be able to hold this control pad in your hand and interact with controls that are almost identical to PXL DEX: you can rotate, zoom in, change camera angles.

Peter Bauman: As someone who’s been engaging with computation for decades, do you see it shifting from hard programming toward models? How are you responding to that transition in your practice? How are you using those tools yourself?

Does the rise of vibe coding and agents concern you given what you've said to me before about the importance of the artist doing the work themselves?

Kim Asendorf: If you are a programmer in any field, you will be interested in AI as a tool to support you. Everything else just doesn't make sense. The programming can be really intense depending on the project size and complexity. So for me, it's quite interesting.

I haven't been on Stack Overflow in months, which is something I couldn't have imagined a few years ago. That's, of course, a game changer.

It was like the go-to page for any questions. Now you can solve them directly within the IDE.

On the side, I'm running two projects where I use AI. One is based on a hardware synthesizer where I used AI to decode the SysEx file, which is basically all the patches and the programming. It’s the database of the synthesizer. However, decoding it with AI was a nightmare. The AI that we have access to is far away from writing something like Photoshop.

People write on Twitter, “Soon everybody can write their own programs.” I think no; we’re still years away from that complexity level. It’s especially useful for simple tasks or any task that can be contained within one module. It can make optimizations in one module without knowing anything about the rest of the program. That's where it's strong already.

I have another project that’s a techno music generator and that level of complexity is quite intense. It is very interesting to see how Claude is dancing around the code base. I vibe coded a lot of it because I wanted to explore a different setup that I haven't used before. I basically coded everything in Rust while having no knowledge of Rust.

Meanwhile, I learned a lot of Rust through working on it. Like I said, you cannot just prompt and your code will go in the right direction. You constantly need to supervise, clean up, have tasks redone.

Because AI is not only lazy, it's also lying to you constantly. It's pretending to have done things that it hasn't done.

It's also very delicate to work with sound. Some things are not clearly audible for you. It takes hours to work through an audio stream mentally. It's really interesting how much I've been lied to, how much shitty code I've been served and needed to delete basically.

AI has little short-term memory and especially no long-term memory. So it always needs to research its own code to keep building. And that takes away, I would say, at least 50% of the energy. It's just constantly rereading what it already wrote itself. It's basically in a mindless loop.

That's the biggest problem to solve. How can AI remember like a human does?

I think it's super helpful. It saves a lot of time. It's very cool to learn through it. I doubt somebody without any knowledge can easily start with it. A few people I know spent months getting into it and have now become masters even though they couldn’t code before. So if you are really into it, there is now a new entrance.

Before, coding took you five years to learn; now you can learn it in six months.

I could definitely imagine going similar routes in the future again, learning something new instead of relying on something you already know just because of the learning curve in front of you.

That changed a lot. So the curve is not as steep anymore. And yes, that's great.

Peter Bauman: Certain programming tasks can be replaced but people that have, like you mentioned, a certain level of programming knowledge have a huge advantage.

Kim Asendorf: Absolutely.

Peter Bauman: It’s like a superpower; it usually comes from something poisonous or toxic but you can still use it for good. For coders and artists, it seems like it can supercharge what they already do.

Kim Asendorf: I would have been lost so many times if I didn’t already know a lot about programming. If you never look at the code, I'm certain you will end up with a lot of dead and unused code that you really don’t need. Maybe that doesn't matter anymore. For me as a programmer with some expectations about syntax, styling and organization, it's a bit painful as well.

Peter Bauman: There’s similar pain for a writer reading AI-written text.

Kim Asendorf: But sometimes as a coder I can get a task done in ten minutes that would have taken two days before. So I won't complain.

I'm very curious what it will change because if we rely too much on AI, we are just remixing mostly and that has already been done anyways before AI with postmodernism. So I hope it leads to some kind of liberation. Maybe it can be the counterforce to social media, which is the opposite of freedom. We thought it liberated us and gave us free speech or whatever but it's just wildly addictive.

The question is, does AI lead to higher quality or just to something broader?

It enables artists to make more complex things than they could make alone. But that does not make things better as an artwork. Most often, it's the simplicity of something that strikes you. At some point, complexity becomes incomprehensible. You have two complex things and they are basically the same because they are so complex that you can't see a difference anymore.

------

Kim Asendorf is a German visual artist who employs a fusion of experimental and conceptual strategies to craft abstract animations, images and sculptures.

Peter Bauman (Monk Antony) is Le Random's editor in chief.